
Astronomy Calculations

Bit Resolution Calculator


In the old days computer memory was expensive, and you did everything you could to keep memory use to a minimum. Nowadays memory is cheap. When processing astronomy photos you don't want the data to be truncated by the calculations you do on it. For example, the average of 11 and 12 is 11.5; when you store it in an 8-bit integer format you lose the 0.5 part. Here is information about some common formats:

Color depth: How large a bit depth do we need? Older astro image processing software, such as the popular and advanced Iris, uses signed 16-bit storage, which gives a range of roughly +/- 32000 (exactly -32768 to +32767). Internally it used 32 bits. Nowadays most software can read and store the 32-bit floating point format. Of these 32 bits, 24 hold the sign and the significand (the base), and the remaining 8 hold the exponent. When I did something more advanced in the 1990s I used Matlab, which uses a 64-bit format in its calculations. But what bit resolution does astro image processing actually demand? I have made a calculator that may help answer that.
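To make the difference between these formats concrete, here is a minimal sketch in Python using only the standard library. The helper `to_float32` is a hypothetical name introduced for this illustration; it rounds a value to 32-bit floating point by packing and unpacking it with `struct`. It is not taken from Iris, Matlab or any other software mentioned here.

```python
import struct


def to_float32(x: float) -> float:
    """Round x to the nearest 32-bit floating point value (hypothetical helper)."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


# Integer storage: averaging 11 and 12 with integer arithmetic drops the 0.5 part.
int_average = (11 + 12) // 2

# 32-bit floating point keeps the fractional part.
float_average = to_float32((11 + 12) / 2)

# The significand gives 24 bits of precision, so above 2**24
# not every integer can be stored exactly any more.
limit = 2 ** 24
stored_limit = to_float32(float(limit))
stored_next = to_float32(float(limit + 1))

print("integer average:", int_average)
print("float32 average:", float_average)
print("2**24 stored exactly:", stored_limit == limit)
print("2**24 + 1 stored exactly:", stored_next == limit + 1)
```

The last two lines show where 32-bit floating point starts to round whole numbers, which is the kind of limit the calculator is meant to probe.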


Type in your data: The calculation starts as soon as you change or enter new figures in the white or dark red boxes. Do not exceed the maximum number of characters; delete characters if necessary.


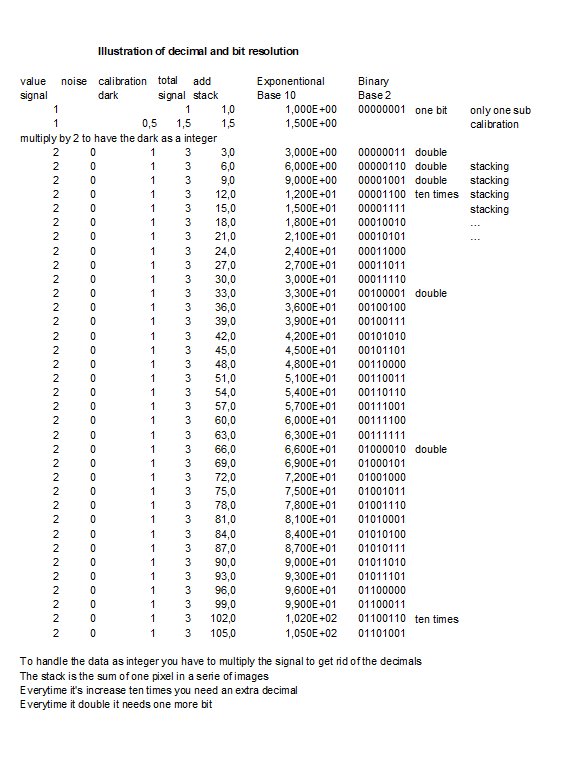

Illustration of bit resolution: I think an illustration of what happens is needed, even for me.

In my calculations I work only with integers to make them easier to follow. When you get a fractional result such as 0.5, you have to multiply by 2 to get back to an integer. This doubles the value, and every time you do that you need one extra bit. With stacking, in this case adding the frames together, you need one more bit every time the value doubles. There are situations that are not handled correctly; see it as a development project. Information: My normal values:

* With that input data, the calculator indicates that I need a 35-bit format, 3 bits more than the standard 32-bit floating point. But as said in the beginning, this is in theory; in practice there is a lot of noise that masks the rounding errors. The 32-bit floating point format will handle all normal cases.

I normally use the AstroImageJ or Fitswork software, both of which handle 32-bit floating point. Here is more information: My Software and equipment. My camera is a Canon 6D, and I store its raw files as they are, in .CR2. Sometimes, if needed, I store them as 16-bit FITS (.fts) or 16-bit TIFF (.tif), both unsigned integer.

There is more to consider. Most cameras are nonlinear, and correcting for that needs some extra precision. Distortion in the optics can be corrected too, and this also needs some extra precision. After feedback from other people I have added more bits for flat calibration.

It was very interesting to do this calculation. I had always thought that 32-bit floating point would be enough with a huge margin. Maybe in some extremely rare cases it is not. It tells me that it could be interesting to run a test with software that can handle the 64-bit floating point standard. To get anything meaningful from it I would need very high quality images.

In the future we may see CMOS cameras with multiple readouts. Doing this with different gains gives an HDR image, with 16-bit or maybe even 18-bit data directly out of the camera.
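The bit-growth reasoning from the illustration (one extra bit per doubling, whether from rescaling a half or from stacking) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code behind the calculator: the function names are hypothetical, and the 14-bit sample depth and frame counts are example values, not the input data discussed above.

```python
def bits_for(value: int) -> int:
    """Number of bits needed to store a non-negative integer."""
    return max(1, value.bit_length())


def average_scaled(a: int, b: int) -> int:
    """Average of a and b, kept as an integer by scaling the result by 2.

    Hypothetical helper: the true average is the returned value divided by 2.
    """
    return a + b


def stacked_bits(sample_bits: int, frames: int) -> int:
    """Bits needed to hold the sum of `frames` images with `sample_bits` bits each."""
    max_sample = 2 ** sample_bits - 1
    return bits_for(max_sample * frames)


# Averaging 11 and 12 gives 11.5; stored as 23 (scaled by 2) it needs one more bit.
print(bits_for(12), bits_for(average_scaled(11, 12)))

# Assumed example: summing 14-bit frames. Each doubling of the frame count adds one bit.
for frames in (1, 2, 64, 100):
    print(frames, "frames:", stacked_bits(14, frames), "bits")
```

Calibration steps such as flat correction add further bits on top of this, which is how a total above the precision of 32-bit floating point can arise in theory.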
